Small script libraries often carry more risk than teams admit. They sit quietly inside build steps, data jobs, deployment paths, admin tasks, test helpers, and reporting routines until one weak assumption turns a harmless helper into a production headache.

That is why validating script libraries deserves more attention than most teams give it. A script may be short, but once several projects depend on it, the blast radius grows fast. Teams that publish updates through internal package registries, shared repositories, or release notes also need a clear communication trail, and public release channels show why structured messaging matters when a technical change affects many people. The same thinking applies inside engineering groups: people need to know what changed, why it changed, and how safely it was checked.

Good validation is not about slowing developers down. It is about removing the kind of uncertainty that makes every small change feel risky. When a team treats shared scripts as living software instead of disposable glue, those scripts stop being hidden liabilities and start becoming dependable building blocks.

Why Shared Scripts Become Riskier Than They Look

A shared script earns trust long before anyone proves it deserves trust. One developer writes it to solve a narrow problem, another copies it into a different workflow, and a third turns it into a small library because everyone keeps asking for it. That path feels natural, but it also hides the moment when a convenience tool becomes a dependency.

Script library testing reveals hidden assumptions

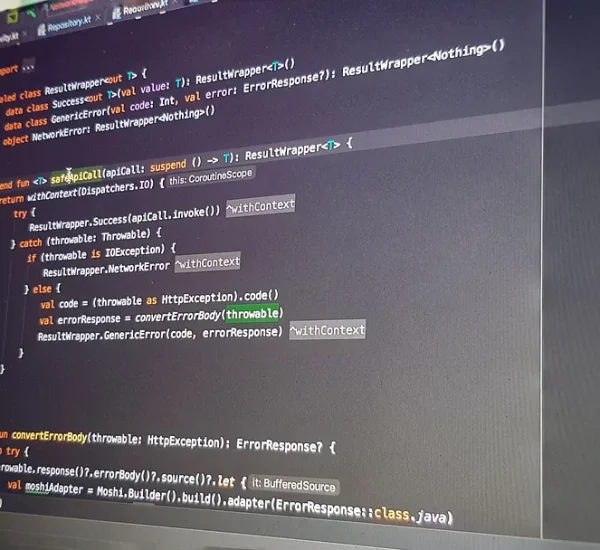

Script library testing often exposes the quiet rules that nobody wrote down. A function may expect file paths to follow one naming pattern, API responses to include one field, or timestamps to arrive in one timezone. Those assumptions stay invisible while the original use case keeps working.

The trouble starts when another team uses the same helper in a different setting. A finance reporting job may pass larger files than the script has ever handled. A deployment task may run on a Linux runner instead of a developer’s laptop. A data cleanup script may receive blank values that the first project never produced. The code did not change, but the environment did.

A good validation process treats those assumptions like load-bearing walls. Before anyone expands the building, the team checks what is holding it up. That may sound cautious, but it saves teams from the worst kind of bug: the one that only appears after people have trusted the tool for months.
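One way to turn an unwritten rule into a written one is to make the script refuse input that breaks it. The sketch below takes the timezone assumption mentioned above and rejects timestamps that do not state their offset. The function name `to_utc` is hypothetical, and the choice to normalize to UTC is an assumption made for illustration.

```python
from datetime import datetime, timezone


def to_utc(stamp: datetime) -> datetime:
    """Convert an aware timestamp to UTC and refuse naive ones outright."""
    # A naive datetime carries no offset, so any conversion would be a guess.
    if stamp.tzinfo is None or stamp.utcoffset() is None:
        raise ValueError("naive timestamp: the timezone must be stated explicitly")
    return stamp.astimezone(timezone.utc)
```

Failing loudly here is a design choice: a second team that passes local times gets an error on day one rather than drifting numbers months later.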

Shared code quality depends on boring details

Shared code quality does not come from clever syntax. It comes from boring details that stay boring under pressure. Naming, input checks, error messages, version notes, and fallback behavior matter more than elegant one-liners once several teams depend on the same script.

A small example makes this clear. A script that deletes temporary files may work fine when every folder name is predictable. Without strict path checks, though, one odd variable can point the cleanup process at the wrong directory. The script still “works,” but it works on the wrong target. That is not a dramatic coding failure. It is a validation failure.
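A strict path check for that kind of cleanup script might look like the sketch below. It assumes every cleanup target must sit strictly inside one known base directory. The name `resolve_cleanup_target` and its parameters are hypothetical.

```python
from pathlib import Path


def resolve_cleanup_target(base_dir: str, requested: str) -> Path:
    """Return the resolved target only if it sits strictly inside base_dir."""
    base = Path(base_dir).resolve()
    target = (base / requested).resolve()
    # Refuse the base itself and anything that escapes it, such as "../x",
    # an empty string, or an absolute path supplied by an odd variable.
    if target == base or base not in target.parents:
        raise ValueError(f"refusing to clean {target}: not inside {base}")
    return target
```

The check resolves both paths before comparing them, so `..` segments and symlinks are accounted for rather than matched as plain text.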

Teams often want speed, so they skip the dull checks. That bargain looks cheap at first. Later, someone spends half a day proving that a failed job came from a missing default value in a helper function written two quarters ago. The work always gets paid for. Validation decides whether you pay early in minutes or later in panic.

A Practical Standard for Validating Script Libraries

Teams do not need a heavy process to make shared scripts safer. They need a standard that is clear enough to repeat and light enough that developers will follow it on normal days, not only after an incident. The best standard answers three questions: what can this script receive, what can it change, and how does it fail?

Reliable script validation starts before testing

Reliable script validation begins when the team defines the contract around the library. A contract does not need legal language or a giant design document. It means the script has stated inputs, outputs, side effects, and limits. Without that, tests become guesswork dressed as confidence.

For example, a script that formats customer records should state whether it accepts partial records, duplicate IDs, blank emails, or non-English characters. A deployment helper should state whether it can retry safely, whether it writes to disk, and whether it touches external services. These details keep developers from treating behavior as magic.
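A contract like this can live in code as well as in a README. The following is a minimal sketch for the customer-record case, assuming records arrive as dictionaries with `id` and `email` keys. `RecordContract` and `check_records` are hypothetical names, not part of any existing library.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RecordContract:
    """Hypothetical contract: what the formatter promises to accept."""
    allow_blank_email: bool = False
    allow_duplicate_ids: bool = False


def check_records(records: list[dict], contract: RecordContract) -> list[str]:
    """Return contract violations; an empty list means the input is accepted."""
    problems = []
    seen_ids = set()
    for index, record in enumerate(records):
        record_id = record.get("id")
        if record_id is None:
            problems.append(f"record {index}: missing id")
        else:
            if record_id in seen_ids and not contract.allow_duplicate_ids:
                problems.append(f"record {index}: duplicate id {record_id!r}")
            seen_ids.add(record_id)
        # Treat a missing, None, or whitespace-only email as blank.
        email = (record.get("email") or "").strip()
        if not email and not contract.allow_blank_email:
            problems.append(f"record {index}: blank email")
    return problems
```

Returning a list of problems instead of raising on the first one lets a caller report every violation in a batch at once, which tends to suit data jobs.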

The counterintuitive part is that validation can make scripts easier to change. Teams often fear rules because they think rules freeze code in place. Good contracts do the opposite. They tell developers which parts must stay stable and which parts can move. That freedom matters when a library grows beyond its first use case.

Dependency review protects the script from outside surprises

Dependency review matters even when the library itself looks clean. Many script libraries rely on shell tools, runtime versions, environment variables, package modules, credentials, or remote APIs. Any one of those can shift under the team’s feet.

A practical review checks the full chain. Does the script require a specific Node, Python, Bash, or PowerShell version? Does it call tools that may behave differently across operating systems? Does it depend on a package that has not been maintained for years? Does it assume a secret name exists in one environment but not another?
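Part of that review can run as a preflight step at the top of the script. This sketch assumes a Python library and covers three of the questions above: runtime version, required tools, and expected secrets. The default values, including the `REPORT_API_TOKEN` variable name, are placeholders for illustration.

```python
import os
import shutil
import sys


def preflight(min_python=(3, 9), tools=("git",), env_vars=("REPORT_API_TOKEN",)):
    """Return unmet requirements; an empty list means the environment looks usable."""
    problems = []
    if sys.version_info < min_python:
        problems.append(f"Python {min_python[0]}.{min_python[1]}+ required")
    for tool in tools:
        # shutil.which returns None when the tool is not on PATH.
        if shutil.which(tool) is None:
            problems.append(f"missing tool on PATH: {tool}")
    for name in env_vars:
        # An unset or empty variable counts as missing.
        if not os.environ.get(name):
            problems.append(f"missing environment variable: {name}")
    return problems
```

A preflight of this kind cannot tell whether a package is still maintained or whether a tool behaves differently across operating systems. Those questions still need a human review.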

One team I have seen ran a seemingly harmless reporting script every Monday. The code barely changed, but an upstream package update changed how dates were parsed. The report looked normal until the totals drifted. Nobody blamed the script at first because the script had been “stable.” Stable code on unstable ground is not stable.

How Teams Build Confidence Without Slowing Work

Validation fails when it feels like a gate invented by people far away from the work. Developers will route around a process that only adds friction. They will support a process that catches real problems without turning every small update into a ceremony.

Automated script checks catch repeat mistakes early

Automated script checks should cover the mistakes people make more than once. Input shape, missing files, failed commands, unsafe paths, empty responses, permission errors, and bad exit codes are all strong candidates. These checks do not need to be fancy. They need to run at the right moment.
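As one illustration from that list, a bad exit code can be turned into an immediate failure instead of a silent one. The wrapper below is a sketch, and `run_step` is a hypothetical helper name.

```python
import subprocess
import sys


def run_step(command: list[str]) -> str:
    """Run one command and raise if it exits non-zero instead of carrying on."""
    result = subprocess.run(command, capture_output=True, text=True)
    if result.returncode != 0:
        raise RuntimeError(
            f"{command[0]} exited with {result.returncode}: {result.stderr.strip()}"
        )
    return result.stdout


if __name__ == "__main__":
    # Uses the current interpreter as a stand-in for a real build or deploy command.
    print(run_step([sys.executable, "-c", "print('step finished')"]))
```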

A small test suite can run when someone opens a pull request. A lint check can catch shell quoting issues before they hit a runner. A dry-run mode can show which files, records, or services the script would change without changing them. That last one is worth its weight in quiet weekends.
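A dry-run mode can be as small as one flag. In the sketch below, the function name, the `.tmp` suffix, and the decision to make `dry_run=True` the default are all assumptions chosen for illustration.

```python
from pathlib import Path


def remove_stale_files(folder: str, suffix: str = ".tmp", dry_run: bool = True) -> list[Path]:
    """Delete files ending in suffix; with dry_run=True only report what would go."""
    targets = sorted(
        p for p in Path(folder).iterdir() if p.is_file() and p.name.endswith(suffix)
    )
    for path in targets:
        if dry_run:
            print(f"[dry-run] would delete {path}")
        else:
            path.unlink()
    # The same list is returned in both modes so callers and tests can inspect it.
    return targets
```

Defaulting to the safe mode means a caller has to opt in to deletion with `dry_run=False`, so a forgotten argument costs a log line rather than a directory.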

The mistake is trying to automate judgment. Automation should handle repeatable checks so people can focus on context. A reviewer should not waste attention counting whether five edge cases still pass. The system should say that. The human should ask whether the new behavior makes sense.

Manual review still catches what tests miss

Manual review has a job that automation cannot replace. It spots risky intent, unclear naming, and surprising side effects. A test can confirm that a cleanup script removes files from one folder. A reviewer can ask why the script is allowed to remove anything without a dry-run flag.

Strong review also protects future readers. Script libraries often become the tools people open during an outage, a release, or a deadline. That is the worst time to decode clever shortcuts. A reviewer who asks for clearer names or better errors is not nitpicking. They are helping the next person think under pressure.

Teams should review scripts through one practical lens: what damage could this cause if called by the wrong person, with the wrong input, at the wrong time? That question cuts through style debates fast. It turns review from personal taste into risk control.

Turning Script Validation Into a Team Habit

The hardest part is not writing tests or adding checks. The hardest part is making validation feel normal. Teams often improve after a painful failure, then slowly drift back to casual habits as the memory fades. Real maturity shows up when nobody needs a disaster to do the right thing.

Versioning makes shared code quality easier to preserve

Versioning gives teams a safe way to change shared code without surprising everyone at once. A script library that changes behavior without a version trail forces every user to absorb risk at the same time. That is reckless, even when the change is well meant.

Simple semantic versioning works well for many teams. Patch releases fix bugs without changing the documented interface. Minor releases add features in a backward-compatible way. Major releases signal breaking changes, so users need to check their workflows. The exact naming system matters less than the discipline behind it.
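To make the version trail actionable, a consumer can classify an upgrade before adopting it. The sketch below assumes plain `MAJOR.MINOR.PATCH` strings with no pre-release or build tags. Both function names are hypothetical.

```python
def parse_version(text: str) -> tuple[int, int, int]:
    """Parse a plain MAJOR.MINOR.PATCH string; pre-release tags are out of scope."""
    major, minor, patch = (int(part) for part in text.split("."))
    return major, minor, patch


def change_risk(installed: str, candidate: str) -> str:
    """Classify an upgrade by the first version component that differs."""
    old, new = parse_version(installed), parse_version(candidate)
    if new <= old:
        return "none"  # same version or a downgrade: nothing new to adopt
    if new[0] != old[0]:
        return "major"
    if new[1] != old[1]:
        return "minor"
    return "patch"
```

Comparing parsed integers rather than raw strings matters here: as text, "1.10.0" sorts before "1.9.0", which would misreport the upgrade.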

Shared code quality improves when releases tell the truth. “Updated helper script” is not a release note. “Changed CSV parser to reject blank account IDs” is useful. People can act on that. They can test their workflow, update inputs, or delay adoption until they are ready.

Ownership keeps reliable script validation alive

Reliable script validation needs owners, not heroes. A hero fixes the broken script after everyone panics. An owner keeps the script understandable, tested, and safe enough that heroics rarely enter the room.

Ownership can be light. One team can own a library, or a rotating maintainer can review changes each month. The point is accountability. Someone must know why the library exists, where it is used, and which changes deserve extra care. Without ownership, shared scripts become abandoned tools with active users.

A healthy habit forms when teams treat scripts as part of the product’s nervous system. They may not appear in the user interface, but they move information, trigger releases, prepare data, and clean up messes. When those small tools fail, the larger system feels it. Validating script libraries is how teams keep those quiet parts of the operation from becoming loud problems.

The next step is simple: choose one shared script library this week, define its inputs and risks, add the checks that would have prevented its last two bugs, and make one person responsible for keeping that standard alive.

Frequently Asked Questions

What is the best way to validate script libraries before release?

Start by defining expected inputs, outputs, side effects, and failure behavior. Then add automated tests for common edge cases, review dependency risks, and run the script in a safe test environment before release. The goal is confidence, not ceremony.

Why does script library testing matter for small teams?

Small teams often share scripts informally, which makes hidden risk easier to miss. Script library testing helps catch broken assumptions before they affect deployments, reports, data tasks, or customer-facing systems. It protects time as much as code.

How often should teams review shared script libraries?

Review shared script libraries whenever behavior changes, dependencies update, or a new workflow starts using them. A light quarterly review also helps catch stale packages, unclear ownership, weak documentation, and risky assumptions that were missed during daily work.

What should be included in reliable script validation?

Reliable script validation should include input checks, output checks, error handling, dependency review, safe test data, dry-run behavior for risky actions, and clear release notes. These pieces help teams trust the script under different conditions.

How can automated script checks reduce production errors?

Automated script checks catch repeat issues before code reaches production. They can detect bad inputs, missing files, unsafe commands, failed dependencies, and unexpected outputs. This gives reviewers more space to focus on intent and risk.

What makes shared code quality hard to maintain?

Shared code quality becomes hard when ownership is unclear, documentation falls behind, and teams copy scripts into new workflows without checking assumptions. The code may still run, but its behavior becomes harder to trust over time.

Should script libraries have version numbers?

Version numbers help users understand change risk. They show whether a release fixes a bug, adds behavior, or changes existing behavior. Without versioning, teams may adopt updates blindly and break workflows that depended on older behavior.

How do teams prevent script libraries from becoming technical debt?

Give each shared library an owner, document its contract, test its risky paths, review dependencies, and remove unused behavior. Technical debt grows when scripts keep gaining responsibilities without structure, so the cure is steady maintenance before problems pile up.